According to the WSJ, the average business uses more than 129 applications, and the number rises every year: it was only 53 in 2015 and 73 in 2017. In the coming years, businesses are likely to use even more applications because they are now readily available through cloud platforms.

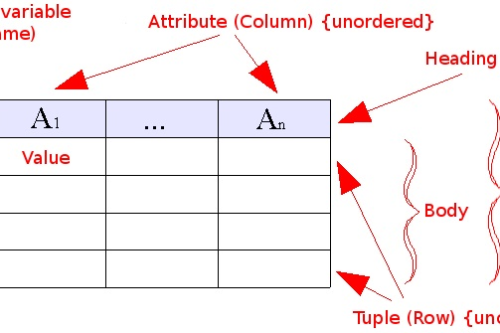

With 129 applications, we have 129 different data sources to extract and migrate data from. Not all of these applications offer built-in integrations the way Salesforce, HubSpot, Power BI, and others do. Some of these data sources have no APIs at all. In that case, the data can only be extracted from a database or exported from the source application as a JSON or CSV file. Integrating this data with the data warehouse is a problem of its own.

In this article, we will discuss various approaches to data integration and how they can be helpful for your business. So, let’s get started.

Best Data Integration Approaches for Business

The end goal of a data integration job is to move data from the source to the destination while ensuring that it is unified, error-free, and compatible with OLAP. This goal can be achieved in many ways, but it is important to know which approach will offer the most value and take the least amount of time.

Let’s learn about different data integration approaches.

Extract, Transform, and Load (ETL)

For integrating data on a large scale, ETL is often the most practical option. ETL has been around for decades and is one of the most commonly used methods to extract, cleanse, and consolidate data in a data warehouse.

ETL software extracts the data you want from a source system, transforms it into the format you need, and then moves it to the destination system. The process is mostly automated and is guided by data maps, which define how source fields map to destination fields and let users automate dataflows.

Modern ETL software has made codeless ETL processing easier. Instead of spending days creating connectors between applications, ETL teams can now create data maps and automate their jobs within minutes.
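To make the three steps concrete, here is a minimal sketch in Python, assuming a small CSV export as the source and an SQLite database standing in for the data warehouse. The sample data, the `DATA_MAP` dictionary, and the function names are all hypothetical and only illustrate the idea of a data map; a real ETL tool would handle this without code.

```python
import csv
import io
import sqlite3

# Hypothetical CRM export; in practice this would come from a file or an API.
SOURCE_CSV = """name,email,signup_date
 Alice Smith ,ALICE@EXAMPLE.COM,2021-03-01
Bob Jones,bob@example.com,2021-04-15
"""

# Hypothetical data map: source column -> destination column.
DATA_MAP = {"name": "full_name", "email": "email", "signup_date": "signed_up_on"}


def extract(text):
    """Extract: read rows from the CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: rename columns per DATA_MAP, trim whitespace, lowercase emails."""
    cleaned = []
    for row in rows:
        record = {dest: row[src].strip() for src, dest in DATA_MAP.items()}
        record["email"] = record["email"].lower()
        cleaned.append(record)
    return cleaned


def load(rows, conn):
    """Load: insert the already-transformed rows into the destination table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customers "
        "(full_name TEXT, email TEXT, signed_up_on TEXT)"
    )
    conn.executemany(
        "INSERT INTO customers VALUES (:full_name, :email, :signed_up_on)", rows
    )
    conn.commit()


def run_etl(conn):
    """Run the steps in ETL order: the data is cleaned before it is loaded."""
    load(transform(extract(SOURCE_CSV)), conn)


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    run_etl(warehouse)
    print(warehouse.execute("SELECT * FROM customers").fetchall())
```

The point to notice is the ordering: the destination only ever sees cleaned rows, because the transformation happens before the load.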

Extract, Load, and Transform (ELT)

Another approach to data integration is ELT, also known as pushdown optimization. In this approach, instead of being transformed in a staging area, the data is loaded directly into the destination data warehouse. Transformation is the final step in the ELT process.

The ELT approach is mostly used for streaming data rather than batch processing. It can be faster and require fewer resources because the transformation work is pushed down to the warehouse. Moreover, it transforms data in chunks rather than in bulk, which makes it a good fit for the financial, medical, and engineering sectors.
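The difference from ETL is easiest to see in code. The sketch below is a simplified illustration, again using SQLite as a stand-in for the data warehouse: the raw records are loaded as-is, and the cleanup runs afterwards as SQL inside the destination. The table names, sample rows, and function names are assumptions made for the example.

```python
import sqlite3

# Hypothetical raw records, loaded exactly as they arrive from the source.
RAW_ORDERS = [
    ("1001", " 19.99 ", "us"),
    ("1002", "5.00", "DE"),
    ("1003", "", "us"),  # missing amount
]


def load_raw(conn, rows):
    """Load: copy the untransformed rows into a raw table in the destination."""
    conn.execute(
        "CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)


def transform_in_warehouse(conn):
    """Transform: build a clean table with SQL that runs inside the destination."""
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT CAST(order_id AS INTEGER)  AS order_id,
               CAST(TRIM(amount) AS REAL) AS amount,
               UPPER(country)             AS country
        FROM raw_orders
        WHERE TRIM(amount) <> ''
        """
    )


if __name__ == "__main__":
    warehouse = sqlite3.connect(":memory:")
    load_raw(warehouse, RAW_ORDERS)
    transform_in_warehouse(warehouse)
    print(warehouse.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
```

Because the raw table stays in the warehouse, the transformation can be rerun or changed later without going back to the source.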

Import/Export Data

Importing and exporting data is a simple approach to data integration. In this approach, data is exported from the main data source, usually as a CSV or Excel file, and then loaded into the destination.

For example, suppose you have data in your CRM and you want to move it to a central repository. You can manually export each list from the CRM and load it into your central repository.

There are two problems with the import/export data integration approach.

- It doesn’t allow any transformations. All data is manually added to the central repository. If you want to make any changes to the data, you will have to edit the files manually. This will take a lot of your time.

- The process is slow. If you manually export lists from the CRM, it may take days to complete the whole process. Loading the data in the data warehouse in a compatible format is an even bigger issue.

Point to Point

Point to point is another data integration approach, but it is now largely obsolete. In the early days of computing, the point to point approach was feasible because an organization used only two or three applications. This meant that all applications could connect to each other directly using point to point connectivity.

For example, three applications A, B, and C need only three connections: A to B, B to C, and C to A. However, organizations today use over 120 applications on a regular basis.

So, the number of points needed for connectivity for these applications can be calculated using the bi-directional point to point integration formula.

(n*(n-1))/2

Here ‘n’ denotes the number of applications an organization uses. In our case, n is 120, so (120*119)/2 = 7,140 connections would be needed to link every application with every other one. This is not feasible anymore.
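As a quick check of the arithmetic, the formula can be written as a small Python function. The function name is ours and purely illustrative.

```python
def point_to_point_connections(n):
    """Number of bidirectional links needed to connect n applications pairwise."""
    return n * (n - 1) // 2


if __name__ == "__main__":
    print(point_to_point_connections(3))    # 3
    print(point_to_point_connections(120))  # 7140
```

The count grows roughly with the square of the number of applications, which is why the approach stops scaling so quickly.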

Data Virtualization

The data virtualization (DV) approach to data consolidation is getting popular because it eliminates the need for physical data movement. DV presents a virtual layer of data at the destination. Users can run queries on the virtual layer, consolidate and unify data, create transformations, and use it for business insights.

The data at the source remains untouched during the whole process. Because nothing is copied, DV is a lot faster, less error-prone, and makes data integration near real-time. Since it is a data layer that offers transformation, it can be used directly instead of the data warehouse for OLAP and data visualizations.
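A rough way to picture a virtual layer is a database view that spans several sources. The sketch below is only an analogy, not how a DV product is built: it attaches two in-memory SQLite databases (hypothetical `crm` and `billing` sources) and defines a temporary view over them, so queries read the sources directly and no rows are copied.

```python
import sqlite3


def build_sources():
    """Create two separate source databases attached to one connection."""
    conn = sqlite3.connect(":memory:")
    conn.execute("ATTACH DATABASE ':memory:' AS crm")
    conn.execute("ATTACH DATABASE ':memory:' AS billing")
    conn.execute("CREATE TABLE crm.customers (id INTEGER, name TEXT)")
    conn.execute("CREATE TABLE billing.invoices (customer_id INTEGER, amount REAL)")
    conn.executemany(
        "INSERT INTO crm.customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")]
    )
    conn.executemany(
        "INSERT INTO billing.invoices VALUES (?, ?)",
        [(1, 100.0), (1, 250.0), (2, 75.0)],
    )
    return conn


def create_virtual_layer(conn):
    """Define a view over both sources; it stores a query, not a copy of the data."""
    conn.execute(
        """
        CREATE TEMP VIEW customer_revenue AS
        SELECT c.name AS name, SUM(i.amount) AS total
        FROM crm.customers AS c
        JOIN billing.invoices AS i ON i.customer_id = c.id
        GROUP BY c.name
        """
    )


if __name__ == "__main__":
    conn = build_sources()
    create_virtual_layer(conn)
    query = "SELECT name, total FROM customer_revenue ORDER BY name"
    print(conn.execute(query).fetchall())
    # A change at the source shows up in the next query against the view.
    conn.execute("INSERT INTO billing.invoices VALUES (2, 25.0)")
    print(conn.execute(query).fetchall())
```

The view holds no data of its own, so every query reflects the current state of the sources, which is the property the text describes as near real-time.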

Application-Specific Integration

If you want to locate, retrieve, clean, and integrate data from a single source, an application-specific integration approach is a good fit. This type of integration makes it easy for data to move from one application to another. The approach is also called ‘application integration’. Organizations can choose which applications they want to connect and in what way.

Application integration is a lot faster and allows complete information exchange between two applications, but it is limited to the small number of applications that have been integrated.

Middleware Integration

Middleware allows the transfer of data between applications and from applications to the databases. It’s useful for integrating legacy systems with modern ones. Middleware acts as an interpreter between these systems and makes the data compatible while it is moved from the legacy system to the modern system.

The middleware data integration approach offers easier access to legacy systems. It connects to them automatically for data streaming and batch processing.

However, the middleware layer needs to be deployed by a developer and requires maintenance from time to time.
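As an illustration of the interpreter role, here is a minimal sketch of a middleware-style adapter in Python. It assumes a hypothetical legacy system that emits fixed-width text records and a modern system that expects JSON; the record layout, field names, and function names are invented for the example.

```python
import json
from datetime import datetime

# Hypothetical fixed-width layout of a legacy record: (field, start, end).
LEGACY_LAYOUT = [("customer_id", 0, 6), ("name", 6, 26), ("joined", 26, 34)]

# Sample legacy record: 6-digit id, 20-character name, date as YYYYMMDD.
SAMPLE_RECORD = "000042" + "JANE DOE".ljust(20) + "20190305"


def legacy_to_modern(record):
    """Translate one fixed-width legacy record into a typed dictionary."""
    fields = {
        name: record[start:end].strip() for name, start, end in LEGACY_LAYOUT
    }
    return {
        "customer_id": int(fields["customer_id"]),
        "name": fields["name"].title(),
        "joined": datetime.strptime(fields["joined"], "%Y%m%d").date().isoformat(),
    }


def to_json(record):
    """Serialize the translated record in the format the modern system expects."""
    return json.dumps(legacy_to_modern(record))


if __name__ == "__main__":
    print(to_json(SAMPLE_RECORD))
```

Keeping the layout in one table such as `LEGACY_LAYOUT` means a format change in the legacy system touches a single place, which is part of the maintenance work mentioned above.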

Which of these data integration approaches is best for business?

Today, organizations store data across multiple environments, including on-premise, cloud, and hybrid. Since this data is scattered across these environments, the best approach for consolidating it depends on many factors, such as data integration budget, business infrastructure, and human resources.

To give you some guidance, we’ve outlined the best scenario for each approach:

| Data integration approaches | When to use them |
| --- | --- |
| ETL | For consolidating data from multiple business units. Supports both on-premise and cloud |
| ELT | For streaming data in real-time |
| Application-based integration | For integrating a few applications to the data warehouse |
| Point to point | Connecting a small number of applications |
| Middleware Integration | When legacy systems need to be connected with modern systems or to a data warehouse |

How Astera Centerprise Helps with All These Data Integration Approaches

Astera Centerprise allows its users to easily consolidate data on a data warehouse through the use of data connectors. It offers all data integration approaches including ETL, ELT, middleware integration, and point to point application integration depending on user requirements.

Users can easily create data maps, run dataflows, and schedule and automate jobs. Astera offers data virtualization, real-time streaming, and batch processing as well.

Learn more about how Astera Centerprise can help you gain relevant business insights.